Research

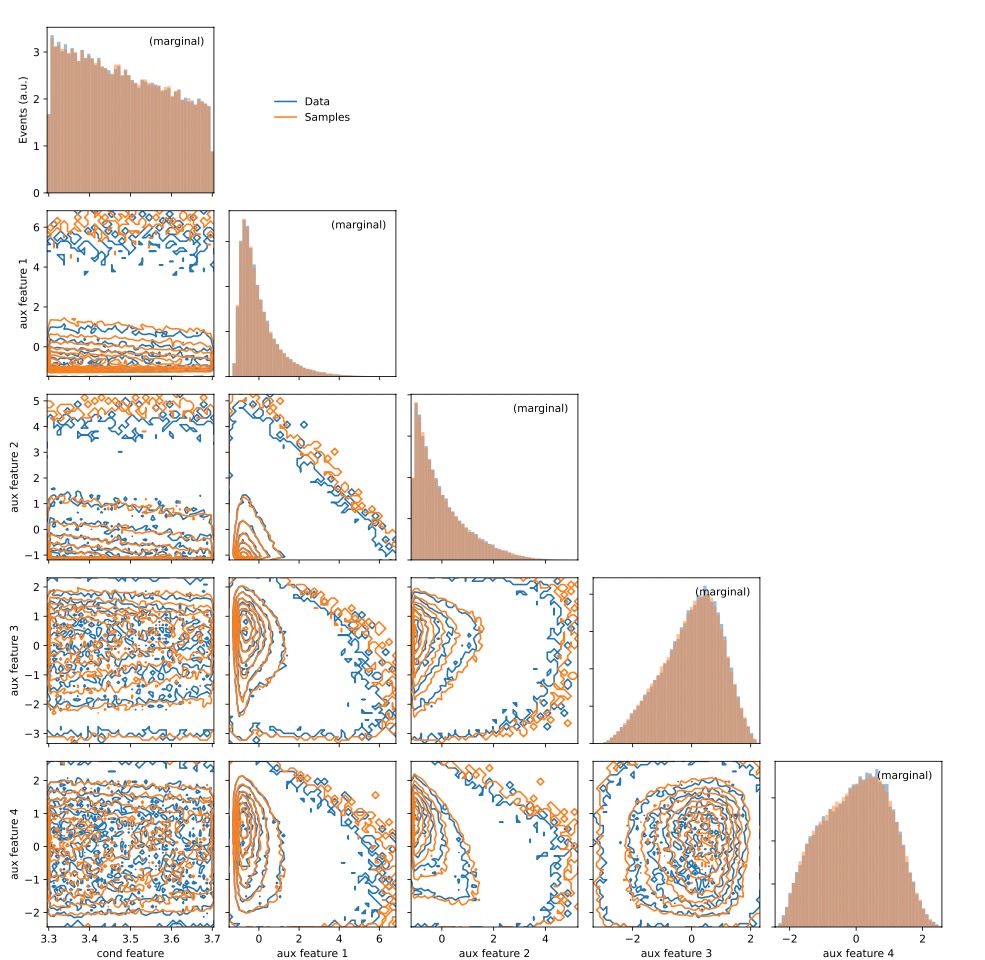

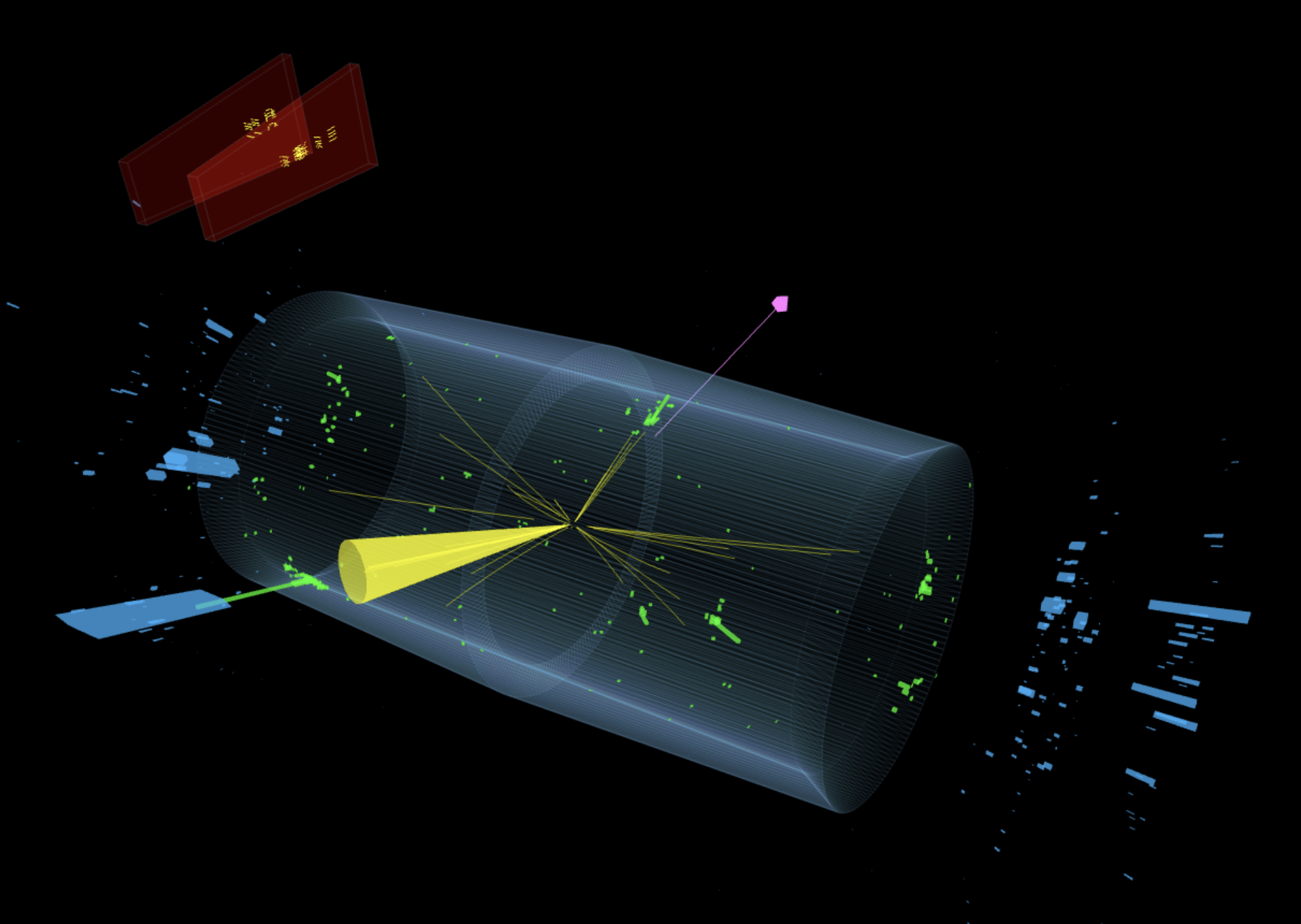

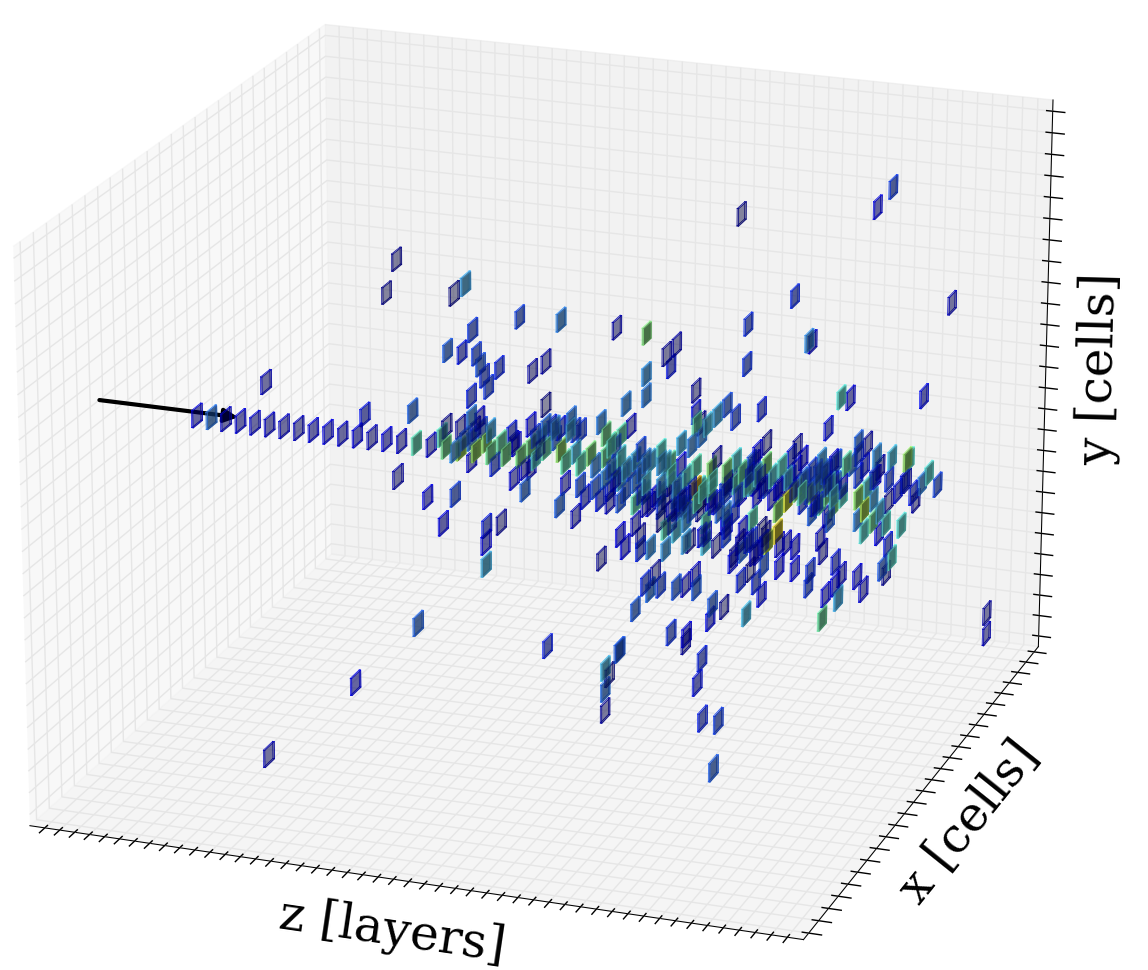

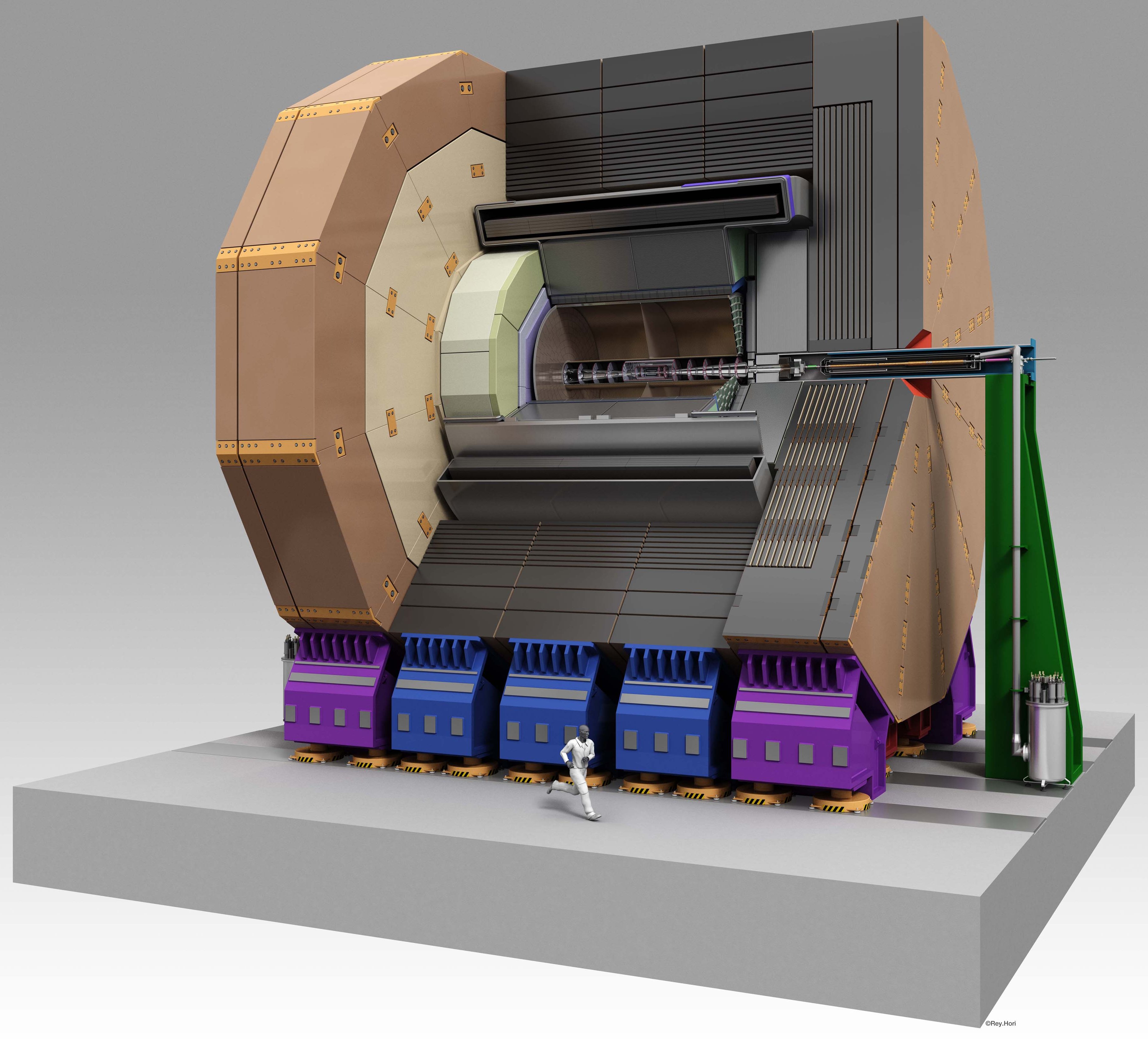

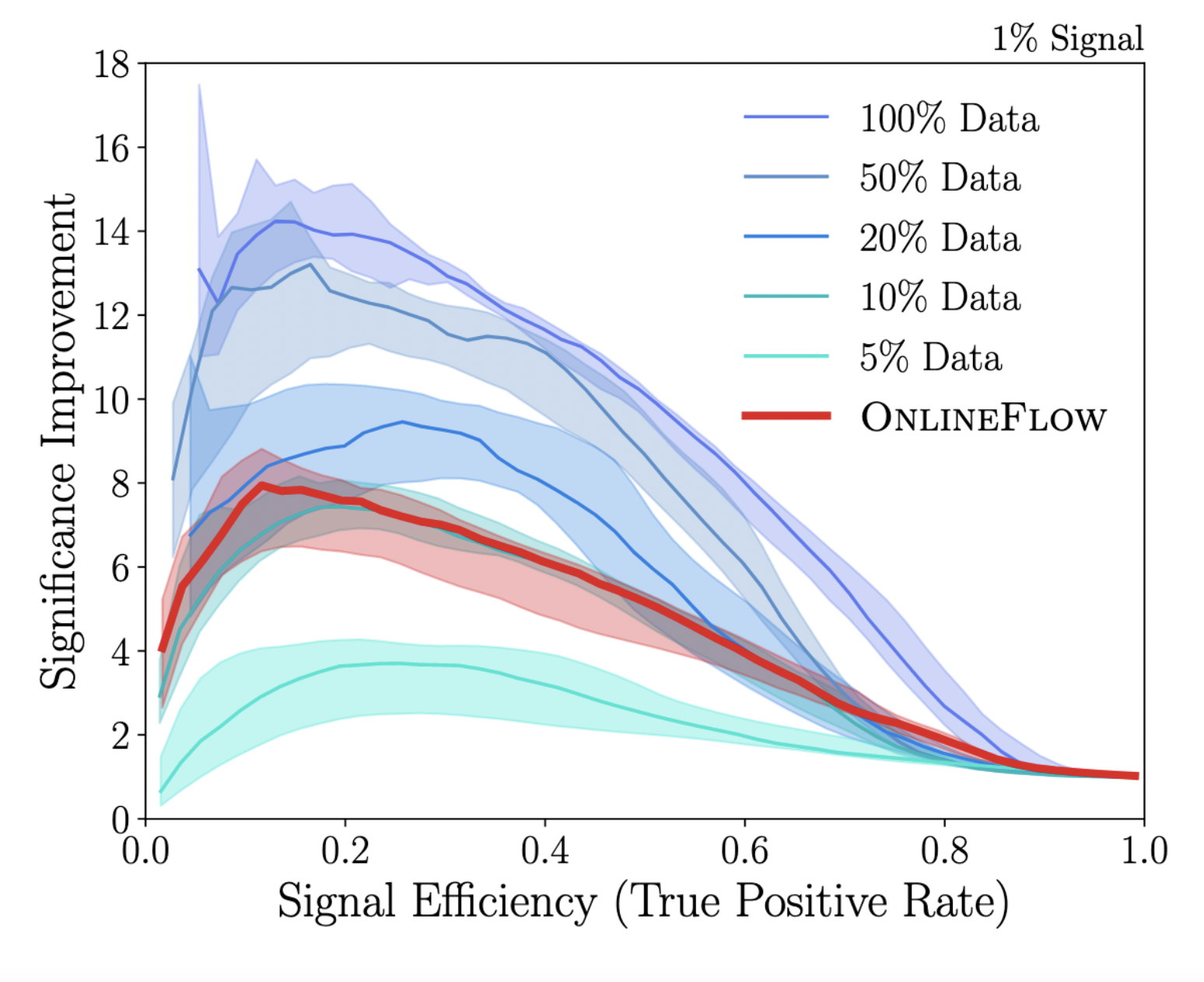

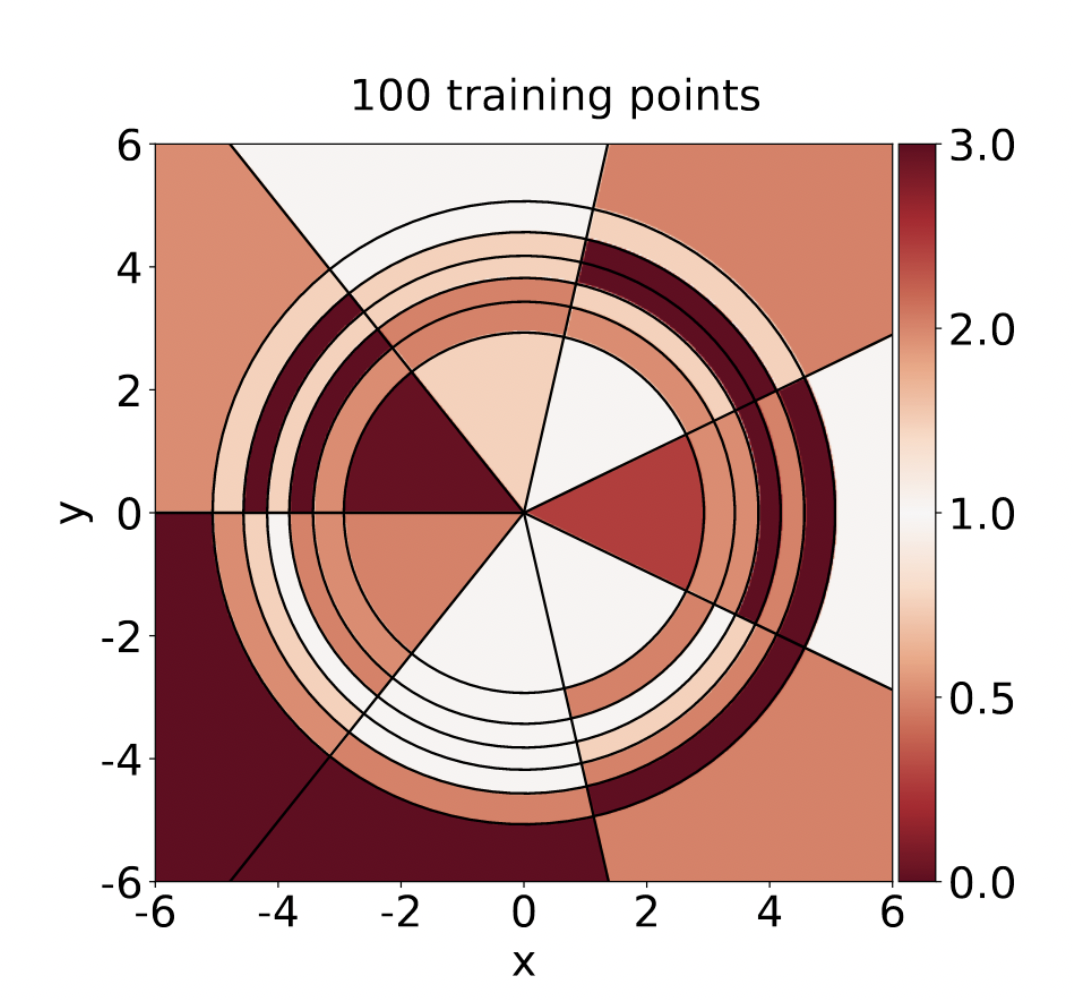

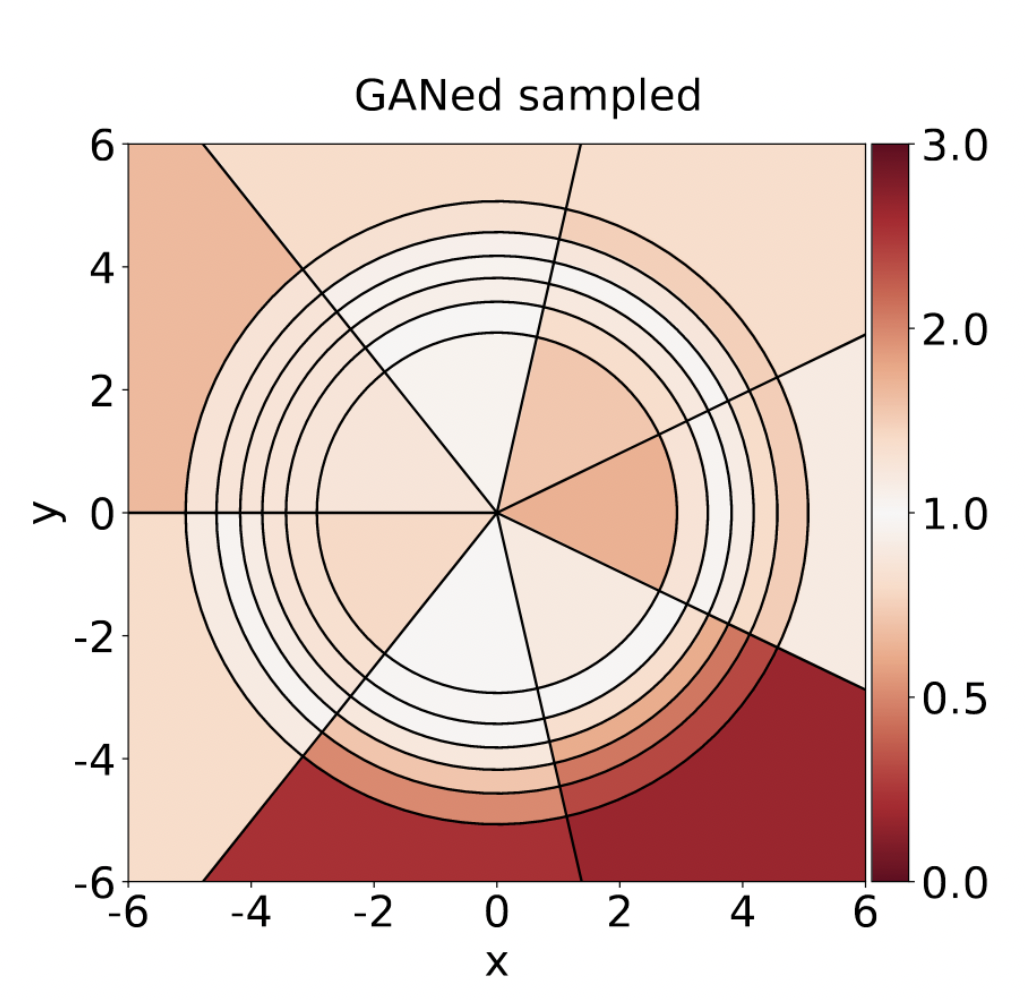

Our research covers the development of new machine learning and artificial intelligence algorithms for physics, as well as their application to searches for new physical phenomena in experimental particle physics at the LHC.

See below for more details, or look at the publications and recent talks. If you are a student at the University of Hamburg, contact me (gregor.kasieczka"AT"uni-hamburg.de) about possible BSc and MSc projects in these areas. Open PhD/postdoc positions are listed here.

A good overview of our different research efforts is also provided by this poster: Link